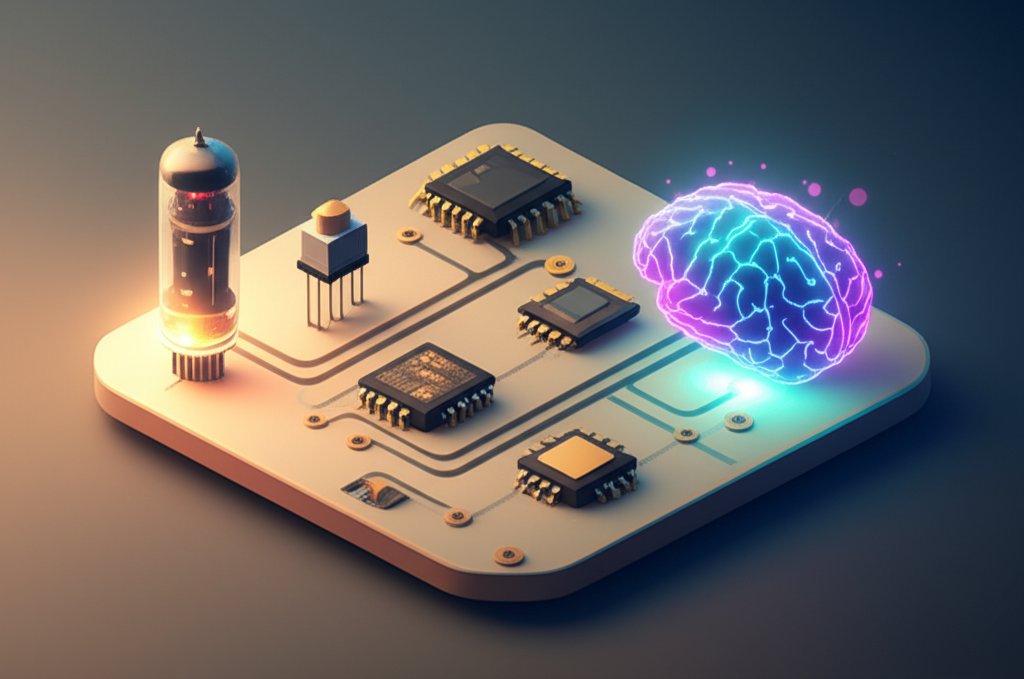

This article traces the history of computing from humanity’s earliest calculating tools to modern Artificial Intelligence. It covers the pivotal moments, brilliant minds, and groundbreaking inventions that shaped our digital world, from the room-sized ENIAC to the AI systems now embedded in everyday life.

The Ancient Roots of Calculation: From Abacus to Analytical Genius

Before electricity hummed, the relentless human desire to automate calculation spurred centuries of ingenious inventions. The very foundations of modern computing were laid in an era defined by gears, levers, and punched cards, setting the stage for the dramatic shifts that would follow. This foundational period gave birth to many early computers in conceptual or mechanical forms.


Early Tools: Counting to Complex Operations

Humanity’s quest for easier calculation began with simple devices. The abacus, used for millennia across diverse cultures, stands as one of the oldest and most enduring calculating tools. However, the 17th century marked a significant leap with the advent of mechanical calculators. In 1642, French polymath Blaise Pascal invented the Pascaline, one of the first mechanical calculators capable of performing addition and subtraction. Decades later, Gottfried Leibniz, a German mathematician and philosopher, improved upon this concept with his Stepped Reckoner (circa 1672), which could also perform multiplication and division through a more complex system of gears.

Charles Babbage and Ada Lovelace: Visionaries Ahead of Their Time

The true visionary of this mechanical era was Charles Babbage, a British mathematician and inventor. In the 19th century, Babbage conceived of the Difference Engine (starting 1822), designed to automate the calculation of polynomial functions for navigation tables, crucial for maritime travel. More remarkably, he then envisioned the Analytical Engine (beginning around 1837), a general-purpose mechanical computer. His designs contained almost all the logical elements of a modern digital computer: an arithmetic logic unit (the “mill”), conditional branching, loops, and integrated memory. Although never fully built in his lifetime due to funding and manufacturing limitations, Babbage’s designs laid a conceptual blueprint for almost all subsequent computational endeavors, marking a monumental milestone in computer history.
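The idea behind tabulating a polynomial mechanically can be sketched with the method of finite differences: once a few starting values are set, every further table entry needs only additions. The function names, the register layout, and the sample polynomial below are illustrative assumptions, not a model of Babbage’s actual mechanism.

```python
def difference_seed(coeffs, start, step):
    """Return the starting value and finite differences of a polynomial.

    coeffs[i] is the coefficient of x**i. The seed is computed once,
    "by hand"; after that, tabulation needs addition only.
    """
    degree = len(coeffs) - 1

    def poly(x):
        return sum(c * x**i for i, c in enumerate(coeffs))

    values = [poly(start + k * step) for k in range(degree + 1)]
    seed = []
    while values:
        seed.append(values[0])
        # Each pass replaces the row with its successive differences.
        values = [b - a for a, b in zip(values, values[1:])]
    return seed


def tabulate(seed, count):
    """Produce `count` successive polynomial values using only addition."""
    registers = list(seed)
    table = []
    for _ in range(count):
        table.append(registers[0])
        # Add each difference into the register above it.
        for i in range(len(registers) - 1):
            registers[i] += registers[i + 1]
    return table


# Hypothetical example: x**2 + x + 41 at x = 0, 1, 2, 3, 4
seed = difference_seed([41, 1, 1], start=0, step=1)
table = tabulate(seed, 5)
print(table)
```

For a polynomial of degree n, the nth difference is constant, which is why a fixed set of adding registers is enough to extend the table indefinitely.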

Crucial to Babbage’s Analytical Engine was the concept of programming. It was Ada Lovelace, daughter of Lord Byron and an accomplished mathematician, who recognized the machine’s potential beyond mere number crunching. She wrote what is widely considered the first algorithm intended to be carried out by a machine, demonstrating how the Analytical Engine could go beyond simple arithmetic to perform complex sequences of operations. Lovelace’s profound foresight into the machine’s ability to manipulate symbols, not just numbers, established a theoretical framework for software, long before hardware caught up. Her contributions underscore the intellectual depth required for computer evolution even at its earliest stages.

Hollerith and Punched Cards: The Electromechanical Era Begins

Towards the end of the 19th century, Herman Hollerith made a significant practical breakthrough. Tasked with processing the overwhelming data of the 1890 US Census, he developed a system of punched cards to store data and an electromechanical tabulating machine to read and process them. This invention dramatically reduced census processing time from years to months, proving the efficiency and scalability of automated data handling. Hollerith’s company, founded in 1896, later merged with other firms into the business that would eventually become International Business Machines (IBM), a titan of the computing industry. His work showcased an early technological transformation in how large datasets could be managed, a direct precursor to modern data processing techniques.

The Dawn of Electronic Computing: War, Breakthroughs, and Stored Programs

The 20th century unleashed an unprecedented surge of innovation, pivoting from mechanical and electromechanical systems to the speed and efficiency offered by electronics. This era marks the beginning of electronic computing and laid the groundwork for everything we know today. The urgency of global conflict propelled the development of the first generation of electronic computers.

World War II’s Catalyst: Codebreaking and the First Electronic Computers

World War II acted as a powerful accelerator for computing innovation. The urgent need for complex calculations – for ballistics trajectories, codebreaking, and scientific research – pushed engineers and scientists to develop faster, more reliable machines.

In Britain, the Colossus computers were developed by Tommy Flowers and his team at Bletchley Park. These machines, specifically designed for deciphering encrypted German communications (Lorenz cipher), were the world’s first electronic digital programmable computers. Operating in secret, Colossus machines used thousands of vacuum tubes to perform logical operations at speeds unimaginable for mechanical devices. Their existence remained classified for decades, obscuring their pivotal role in the history of computing.

Across the Atlantic, in the United States, the Electronic Numerical Integrator and Computer (ENIAC) was built by J. Presper Eckert and John Mauchly at the University of Pennsylvania and publicly unveiled in 1946. ENIAC is widely recognized as the first general-purpose electronic digital computer. It weighed 30 tons, occupied 1,800 square feet, contained over 17,000 vacuum tubes, and consumed 150 kW of power. Despite its immense size and power consumption, ENIAC could perform 5,000 additions per second, a colossal leap in computational power. Its public debut heralded the dawn of the digital age.

The Von Neumann Architecture: Stored Programs and Modern Design

While ENIAC was revolutionary, it required extensive manual re-wiring to change programs, making it cumbersome and slow to reconfigure. A critical conceptual leap came with the idea of the “stored-program computer,” primarily articulated by John von Neumann in his influential 1945 “First Draft of a Report on the EDVAC.” The von Neumann architecture proposed that both the program instructions and the data being processed could be stored in the same memory unit. This allowed for much greater flexibility and eliminated the need for manual re-wiring between tasks, making computers truly programmable in the modern sense.

The first computer to fully implement the stored-program concept was the Small-Scale Experimental Machine (SSEM), nicknamed “Baby,” in Manchester, England, in 1948, followed by the Manchester Mark 1 and the Electronic Delay Storage Automatic Calculator (EDSAC) in Cambridge in 1949. This architectural model became the fundamental design principle for nearly all subsequent computers, a foundational computer history milestone that continues to influence system design today.
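The stored-program idea described above can be illustrated with a toy machine in which instructions and data sit in the same memory list. The four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) and the memory layout are invented for this sketch and do not correspond to the EDVAC, the SSEM, or any real machine.

```python
def run(memory):
    """Execute a program held in the same memory list as its data."""
    acc = 0  # accumulator register
    pc = 0   # program counter: address of the next instruction
    while True:
        op, arg = memory[pc]  # fetch the instruction from memory
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {op}")


# Addresses 0-3 hold instructions; addresses 4-6 hold data.
memory = [
    ("LOAD", 4),   # 0: acc = memory[4]
    ("ADD", 5),    # 1: acc += memory[5]
    ("STORE", 6),  # 2: memory[6] = acc
    ("HALT", 0),   # 3: stop
    2,             # 4: first operand
    3,             # 5: second operand
    0,             # 6: result goes here
]

result = run(list(memory))
print(result[6])
```

Changing the task means writing different values into memory rather than re-wiring the machine, which is the flexibility the stored-program design provides.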

First Commercial Computers: UNIVAC and IBM’s Entry

The decade following ENIAC saw the production of the first commercial electronic computers, serving government agencies and large corporations. The UNIVAC I (Universal Automatic Computer I), designed by Eckert and Mauchly, was the first commercial computer produced in the United States. Its most famous early application was predicting the outcome of the 1952 US presidential election for CBS, a public demonstration of computing’s potential that stunned audiences and brought computing into the public consciousness.

IBM, leveraging its expertise from Hollerith’s tabulating machines, quickly entered the electronic computer market. The IBM 701, released in 1952, was its first electronic stored-program computer, followed by a series of successful mainframe computers like the IBM 704 and 709. These powerful machines, characterized by their reliance on vacuum tubes for processing, massive size, and high cost, dominated corporate and government computing throughout the 1950s. They were central to advancing scientific research, defense calculations, and large-scale business data processing, and they cemented the early impact of electronic computing on modern society.

The Transistor Revolution: Scaling Down, Powering Up

The bulky, hot, and unreliable nature of vacuum tubes created a significant bottleneck for further advancement in computer evolution. The invention of the transistor ushered in an era of unprecedented miniaturization, efficiency, and reliability, igniting the technological transformations that would eventually bring computers to every home and pocket.

Transistors: The Tiny Giant that Remade Electronics

In 1947, at Bell Telephone Laboratories, John Bardeen, Walter Brattain, and William Shockley invented the transistor. This tiny semiconductor device could amplify and switch electronic signals with far greater efficiency, reliability, and less heat than vacuum tubes. It consumed significantly less power and was dramatically smaller and cheaper to produce.

The transistor’s impact was immediate and profound. It led to the “second generation” of computers, which were smaller, faster, more energy-efficient, and more reliable than their vacuum-tube predecessors. This invention is arguably the single most important computer history milestone for enabling the widespread adoption and portability of electronic devices. Without it, the personal computer revolution, and indeed the digital age as we know it, would have been impossible.

Integrated Circuits: The Birth of Microelectronics

Building on the transistor, the next major breakthrough was the integrated circuit (IC), invented independently by Jack Kilby at Texas Instruments in 1958 and Robert Noyce at Fairchild Semiconductor in 1959. An IC combines multiple transistors, resistors, and capacitors onto a single, small semiconductor chip. This innovation allowed for an exponential increase in component density while further reducing size, cost, and power consumption.

The integrated circuit became the cornerstone of the “third generation” of computers, notably exemplified by IBM’s System/360 in 1964. These machines were not only more powerful but also more affordable and versatile, capable of handling a wider range of applications. The IC’s development solidified the trajectory towards miniaturization and higher performance, an essential milestone in computer history for scalable manufacturing and complex system design.

The Microprocessor: Intel’s Game-Changer and the Fourth Generation

The culmination of transistor and integrated circuit technology arrived with the invention of the microprocessor. In 1971, Intel released the 4004, the first commercially available single-chip microprocessor. Designed by Federico Faggin, Ted Hoff, and Stanley Mazor, this tiny chip contained 2,300 transistors and could perform calculations that previously required an entire room full of electronics.

The microprocessor meant that the central processing unit (CPU) of a computer could be placed on a single chip. This innovation drastically reduced the cost and size of computing power, making it accessible to a much wider range of applications and devices beyond mainframes. The Intel 4004 was a monumental computer history milestone, directly paving the way for desktop calculators, embedded systems, and, most importantly, the personal computer revolution, defining the “fourth generation” of computing.

The Personal Computer Era & Global Connectivity: Computing for the Masses

With miniaturization and drastically reduced costs, computing power began to escape the confines of government labs and corporate data centers. This era marks a critical phase in the history of technological transformations, shifting computing from specialized, expensive tools to everyday utilities accessible to everyone. This period dramatically accelerated computer evolution.

The Rise of Personal Computers: Apple, IBM PC, and the Home Computing Boom

The 1970s saw the emergence of hobbyist computers, such as the Altair 8800, but it was in the late 1970s and early 1980s that personal computers (PCs) truly took off. Companies like Apple, Commodore, and Tandy introduced machines that could be bought by individuals for home and small business use. The Apple II, released in 1977, was particularly influential, offering color graphics and expansion slots that made it popular for games, educational software, and early spreadsheets.

The landscape changed dramatically with the introduction of the IBM Personal Computer (IBM PC) in 1981. While not the first PC, IBM’s established brand name, robust marketing, open architecture (using off-the-shelf components), and adoption of Microsoft’s MS-DOS operating system created a new industry standard. This milestone in computer history led to a proliferation of “IBM PC compatible” machines, sparking intense competition and rapid innovation that drove down prices and expanded market reach. Computing was no longer just for experts; it was for everyone, accelerating domestic and business computer evolution.

Graphical User Interfaces (GUIs): Making Computers Accessible

Early personal computers relied on command-line interfaces, requiring users to type specific, often cryptic commands. This presented a significant barrier for many potential users. The development of the Graphical User Interface (GUI) was a game-changer, making computers intuitive and user-friendly. Building on Douglas Engelbart’s 1960s work on the mouse and interactive computing at the Stanford Research Institute, researchers at Xerox PARC, including Alan Kay, developed the GUI in the 1970s. It used icons, windows, and a mouse, allowing users to interact with the computer visually and directly.

Apple brought the GUI to the mainstream with the Macintosh in 1984. Its iconic “1984” Super Bowl commercial and user-friendly design revolutionized how people perceived and interacted with computers. Though initially expensive, the Macintosh’s innovative interface set a new standard. Microsoft, seeing the potential, released Windows in 1985, initially as a graphical environment running on top of MS-DOS. Windows gradually gained dominance, becoming the de facto standard for PCs worldwide and making complex computing accessible to billions. The GUI is a profound computer history milestone for democratizing technology.

The World Wide Web: Connecting Humanity

While the internet’s precursor, ARPANET, had existed since the late 1960s as a network for researchers and academics, it was the World Wide Web that truly made global connectivity a reality for the masses. In 1989, Tim Berners-Lee, a scientist at CERN (the European Organization for Nuclear Research), proposed a system for sharing information via hypertext documents accessible over the internet. By 1991, the first website was online, and by 1993, the release of the Mosaic web browser (followed by Netscape Navigator and Internet Explorer) made navigating the web easy and intuitive for non-technical users.

The World Wide Web ushered in an unprecedented era of information sharing, communication, and commerce. It transformed industries, created new global communities, and fundamentally altered how we access knowledge and interact with each other. This is arguably one of the most significant technological transformations of our time, creating the interconnected digital world we live in today.

Mobile Computing: Ubiquitous Access and the Smartphone Revolution

The late 20th and early 21st centuries witnessed another dramatic shift: the move towards mobile computing, ushering in another stage of computer evolution. Laptops became commonplace, followed by personal digital assistants (PDAs) like the PalmPilot and early smartphones like BlackBerry. However, the true revolution came with devices like the Apple iPhone (2007) and the subsequent explosion of Android devices. These smartphones seamlessly integrated powerful computing, high-resolution cameras, GPS, and internet access into a single, pocket-sized device.

This mobile milestone in computer history meant computing power was no longer confined to desktops or even laptops. It was ubiquitous, always on, and always connected, enabling new forms of communication, entertainment, and productivity. The app economy flourished, turning smartphones into essential tools for daily life and deepening the impact of the history of computing on individuals worldwide.

The Age of Artificial Intelligence: Mimicking Minds, Shaping Futures

As the previous waves of computer evolution matured, the focus shifted from raw processing power to making machines “smarter”. The concept of intelligent machines, or Artificial Intelligence (AI), has been a profound aspiration throughout computer history, and in the 21st century, it moved from science fiction to everyday reality. Understanding the history of AI is crucial to comprehending modern computing.

From Conceptualization to Early Dreams

The concept of intelligent machines dates back to classical antiquity, but its modern scientific pursuit began in earnest in the mid-20th century. Alan Turing, with his seminal 1950 paper “Computing Machinery and Intelligence,” proposed the “Imitation Game” (now known as the Turing Test) as a criterion for machine intelligence, laying a foundational concept for the history of AI. The term “Artificial Intelligence” itself was coined in 1956 by John McCarthy at the Dartmouth Conference, marking the official birth of AI as a field of study.

Early AI research, often funded by the US Department of Defense, focused on symbolic reasoning, expert systems, and problem-solving through logical rules. Programs like ELIZA (1966) could mimic conversation, while SHRDLU (1972) could understand and respond to natural language commands within a limited “blocks world.” However, these systems were largely rule-based and struggled with the complexities of real-world knowledge and common sense, leading to periods dubbed “AI winters” due to reduced funding and enthusiasm.
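The rule-based style of these early conversational programs can be sketched with a few pattern-and-template pairs. The rules, the default reply, and the `respond` function below are invented for illustration; they are not ELIZA’s original script, only an example of the same general approach.

```python
import re

# Hypothetical rules: a pattern to look for and a reply template.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(text):
    """Return the reply for the first matching rule, or a stock phrase."""
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Reuse the user's own words, minus trailing punctuation.
            captured = [g.rstrip(".!?") for g in match.groups()]
            return template.format(*captured)
    return DEFAULT_REPLY


print(respond("I need a holiday."))
print(respond("The weather is odd today"))
```

The sketch has no model of meaning: any input that matches no rule falls through to the stock phrase, which hints at why such systems struggled outside narrow scripts.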

The Resurgence of AI: Data, Algorithms, and Computational Power

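The 21st century has seen a dramatic resurgence of AI, fueled by three key factors:

- Data: Large digital datasets became available for training.

- Algorithms: Machine learning methods, particularly neural networks and deep learning, matured.

- Computational Power: Faster hardware made it practical to train large models.

This convergence led to breakthroughs in areas like image recognition, natural language processing, and game playing. Google’s AlphaGo defeated Go world champion Lee Sedol in 2016, nearly two decades after IBM’s Deep Blue had defeated chess world champion Garry Kasparov in 1997, showcasing AI’s growing prowess in complex strategic tasks.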

Modern AI: Deep Learning and Large Language Models

Today, AI powers everything from personalized recommendations and voice assistants (Siri, Alexa) to medical diagnostics, fraud detection, and autonomous vehicles. Systems like large language models (e.g., Google’s LaMDA, OpenAI’s GPT series) represent a significant milestone in computer history, demonstrating unprecedented abilities to understand, generate, and manipulate human language. These models can write code, compose music, answer complex questions, and even engage in creative writing, profoundly altering human-computer interaction.

AI is more than a single tool; it is a fast-evolving family of techniques that promises to automate, augment, and alter almost every aspect of society, driving the next wave of technological transformations. Whether and how artificial general intelligence (AGI) and superintelligence might be achieved remains a significant area of research and debate.

Beyond Tomorrow: Quantum, Edge, and the Next Frontier

The pace of innovation shows no signs of slowing. New frontiers are emerging that promise even more profound technological transformations, with Quantum Computing and advanced integrations at the forefront of computer evolution.

Quantum Computing: Beyond Binary Limits

Classical computers use bits, each of which is either a 0 or a 1. Quantum computers use “qubits,” which exploit quantum-mechanical principles such as superposition (a qubit can be in a weighted combination of 0 and 1 until it is measured) and entanglement (the states of linked qubits cannot be described independently). For certain specific problems, this allows quantum algorithms to run exponentially faster than the best known classical methods, even on the most powerful supercomputers.
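Superposition can be illustrated with a small classical simulation of a single qubit, represented as a pair of amplitudes for the states 0 and 1. This is only a sketch under simplifying assumptions: it uses real-valued amplitudes, the function names are illustrative, and simulating one qubit on a classical machine says nothing about quantum speedups.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp0, amp1)."""
    amp0, amp1 = state
    s = 1 / math.sqrt(2)
    return (s * (amp0 + amp1), s * (amp0 - amp1))


def probabilities(state):
    """Return the chance of measuring 0 and of measuring 1."""
    return tuple(abs(amp) ** 2 for amp in state)


zero = (1.0, 0.0)             # a qubit that is definitely 0
superposed = hadamard(zero)   # an equal mix of 0 and 1
restored = hadamard(superposed)

print(probabilities(zero))        # all weight on 0
print(probabilities(superposed))  # roughly half and half
print(probabilities(restored))    # back to (almost exactly) all weight on 0
```

Applying the gate a second time returns the qubit to its starting state, which shows that a superposition carries more structure than a coin flip: the amplitudes can cancel as well as add.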

Still in its early stages of development, quantum computing has the potential to revolutionize fields like drug discovery, material science, financial modeling, cryptography (both for breaking and creating new secure methods), and complex optimization problems. It could solve problems currently intractable for classical machines. While practical, stable, and error-corrected quantum computers are still years away, every breakthrough represents a significant computer history milestone on the path to unlocking a new dimension of computational power.

Emerging Frontiers: Edge Computing, Biotech Integration, and The Metaverse

Beyond AI and quantum computing, several other areas promise future milestones in computer history:

- Edge Computing: This paradigm shifts data processing closer to its source (e.g., on smart devices, IoT sensors) rather than sending it all to a central cloud. This reduces latency, saves bandwidth, and enhances privacy, crucial for real-time applications like autonomous vehicles, smart factories, and augmented reality.

- Biotech Integration: The convergence of computing with biology is leading to breakthroughs from rapid DNA sequencing and personalized medicine to advanced prosthetics and brain-computer interfaces (BCIs). BCIs could someday allow direct communication between the human brain and external devices, blurring the lines between biological and artificial intelligence.

- The Metaverse: Envisioned as a persistent, interconnected virtual world, the metaverse aims to blur the lines between physical and digital realities. Driven by advanced graphics, networking, blockchain technology, and immersive technologies like virtual and augmented reality, it promises new forms of social interaction, commerce, and entertainment.

- Sustainable Computing: As computing power grows, so does its environmental footprint. Future innovations will focus on energy-efficient hardware, green data centers, and new, less resource-intensive methods of data processing and storage.

- Cybersecurity Advancements: With increasing connectivity, the sophistication of cyber threats escalates. The future of computing will heavily rely on advanced AI-driven cybersecurity systems, quantum-resistant encryption, and robust decentralized security architectures to protect our digital infrastructure.

These emerging fields highlight that the history of computing is not a closed book but an ongoing saga of continuous innovation and profound technological transformations. The future promises an interconnected, intelligent, and increasingly intertwined digital and physical existence as computer evolution continues its relentless march.

Generations of Computers: A Quick Overview

To make computer evolution easier to follow, it is often divided into “generations,” primarily based on the core electronic component used:

- First Generation (1940s-1950s): Vacuum Tubes.

  - Characteristics: Massive size, high power consumption, generated a lot of heat, prone to failure.

  - Examples: ENIAC, UNIVAC I.

  - Programming: Machine language, often requiring complex wiring.

- Second Generation (1950s-1960s): Transistors.

  - Characteristics: Smaller, faster, more reliable, less heat and power than vacuum tubes.

  - Examples: IBM 7000 series.

  - Programming: Assembly language, early high-level languages (FORTRAN, COBOL).

- Third Generation (1960s-1970s): Integrated Circuits (ICs).

  - Characteristics: Even smaller, faster, more efficient. Marked the beginning of microelectronics.

  - Examples: IBM System/360.

  - Programming: Operating systems, widespread use of high-level languages.

- Fourth Generation (1970s-Present): Microprocessors.

  - Characteristics: Single chip containing the CPU, leading to personal computers and the internet. Dramatic decrease in size and cost.

  - Examples: Apple II, IBM PC, modern desktops, laptops, smartphones.

  - Programming: GUIs, object-oriented programming, network operating systems, web development.

- Fifth Generation (Present & Future): Artificial Intelligence & Advanced Architectures.

  - Characteristics: Focus on parallel processing, AI, quantum computing, natural language processing, expert systems.

  - Examples: AI-powered systems, supercomputers, early quantum computers, neural networks.

  - Programming: Machine learning frameworks, specialized AI languages, massive data processing.

Conclusion

From the mechanical gears of Babbage’s Analytical Engine to the intricate neural networks of today’s Artificial Intelligence, the milestones in computer history represent a testament to human ingenuity and the relentless pursuit of progress. We have witnessed an astonishing computer evolution from room-sized vacuum-tube machines to pocket-sized supercomputers, each step marking a profound technological transformation.

The journey, from early electronic machines like ENIAC through the transistor revolution, the democratization of personal computing, the global connectivity of the World Wide Web, and the mobile revolution, has reshaped industries, societies, and individual lives. As we look towards the future, promising breakthroughs in AI, quantum computing, and other emerging frontiers suggest that the most extraordinary chapters in the history of computing may be yet to be written. The digital age is not merely an era; it’s a dynamic, ever-unfolding narrative of human progress, constantly pushing the boundaries of what’s possible. Dive deeper into this incredible timeline and continue to explore the marvels of human innovation!

FAQ

Q1: What is considered the earliest conceptual “computer” or calculating device?

A1: While the abacus is an ancient calculating tool, Charles Babbage’s Analytical Engine (designed in the 1830s) is widely considered the conceptual predecessor to the modern general-purpose computer, outlining many of its core logical components and programming concepts thanks to Ada Lovelace.

Q2: When did electronic computers first emerge, and what was a key early example?

A2: Electronic computers emerged during and immediately after World War II. The ENIAC (Electronic Numerical Integrator and Computer), publicly unveiled in 1946, is a key early example, recognized as the first general-purpose electronic digital computer, though the secret Colossus machines preceded it in specific codebreaking tasks during WWII.

Q3: How did the transistor revolutionize computer history and computer evolution?

A3: The transistor, invented in 1947, revolutionized computer history by replacing bulky, hot, and unreliable vacuum tubes. Its smaller size, lower power consumption, and increased reliability enabled the miniaturization of electronic devices, paving the way for integrated circuits, microprocessors, and ultimately, the widespread adoption of personal computers and mobile devices, drastically accelerating computer evolution.

Q4: What was the significance of the World Wide Web in the history of computing?

A4: The World Wide Web, conceived by Tim Berners-Lee in 1989, transformed the Internet from a niche network for researchers into a global platform for information sharing, communication, and commerce. It made computing and information accessible to billions worldwide through easy-to-use web browsers, fundamentally changing society.

Q5: What are the primary differences between classical computers and quantum computers?

A5: Classical computers process information as bits, each either 0 or 1, while quantum computers use qubits, which can exist in a superposition of both states and can be entangled with one another. This allows quantum computers to perform certain complex calculations exponentially faster for specific types of problems, opening doors to solving currently intractable problems.

Q6: How has Artificial Intelligence contributed to recent technological transformations?

A6: Artificial Intelligence, particularly with advancements in machine learning and deep learning, has driven significant transformations by enabling machines to learn, reason, and make decisions. This has led to innovations in areas like natural language processing (e.g., large language models), image recognition, personalized recommendations, and automation across industries, marking a pivotal new chapter in the history of AI.

Q7: What role did early computers play in World War II?

A7: The British Colossus machines were used during World War II to break encrypted enemy communications. The American ENIAC was commissioned during the war to calculate ballistics trajectories, but it was not finished until after the fighting ended, so its direct wartime contribution was limited. Both projects demonstrate the critical need for rapid computation that the war created.